More on Evaluating Classifiers

Spring 2026

Department of Computer Science

Middlebury College

There are many questions to ask when we evaluate classifiers, beyond any single quantifiable metric.

What is the task that the classifier is supposed to perform?

Does the classifier need to be complicated to achieve its task?

Is the task that the classifier is intended to perform actually possible?

What is the task that the classifier is supposed to perform?

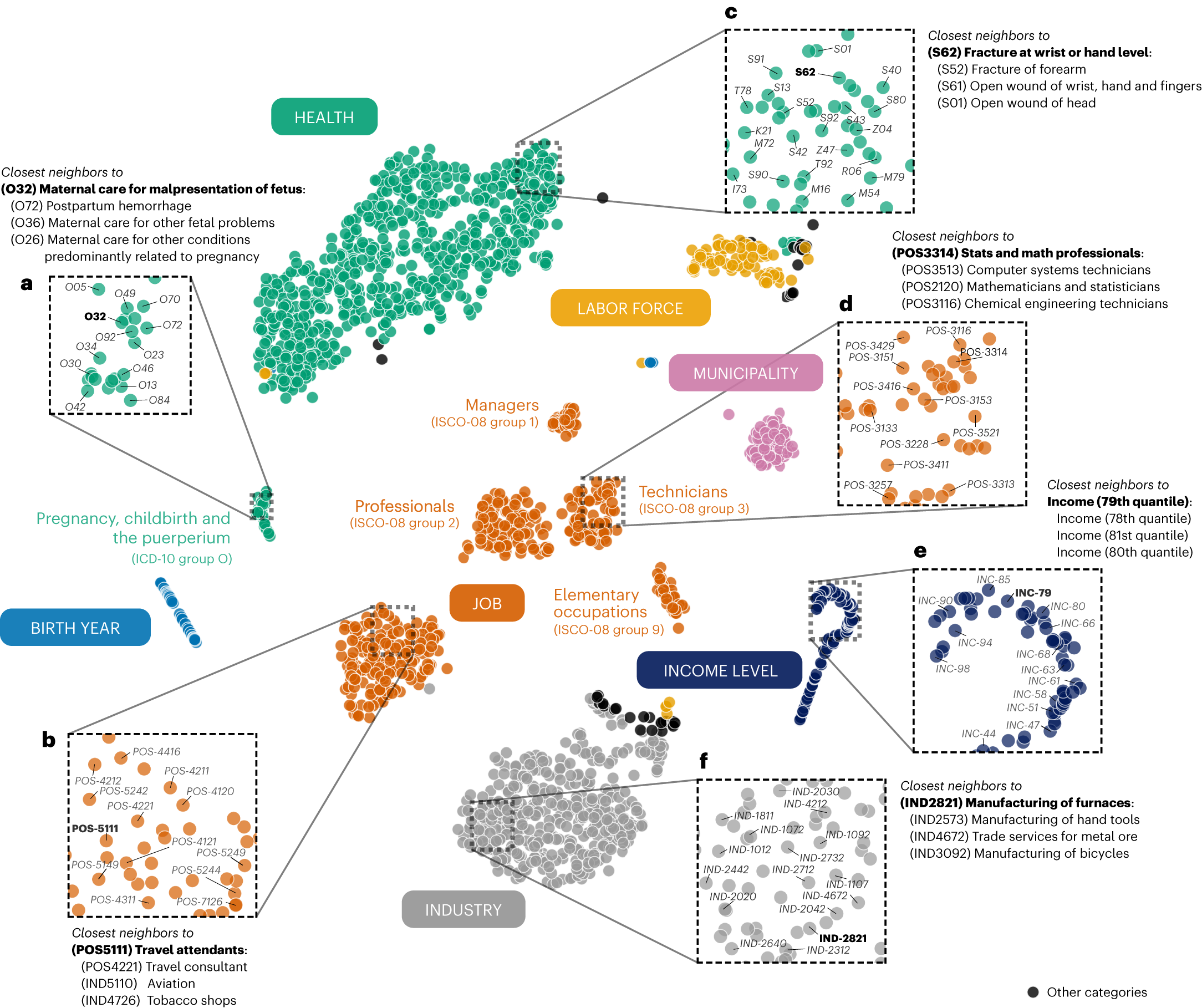

Researchers analyzed aspects of a person’s life story between 2008 and 2016, with the model seeking patterns in the data. Then, they used the algorithm to determine whether someone had died by 2020. The Life2vec model made predictions with 78% accuracy.

- Media writeup of Savcisens et al., 2024. “Using Sequences of Life-Events to Predict Human Lives.” Nature Computational Science 4 (1): 43–56.

…They used the algorithm to determine whether someone had died [between 2016 and 2020]. The Life2vec model made predictions with 78% accuracy.

What do you wonder?

From the authors:

Because our cohort is very young, almost everyone survives (more than 95%).

This means that if we created an algorithm that always predicted “survive”, it would get a very high accuracy (over 95%).

To address the issue, we balance the dataset, equivalent of 50,000 with survive outcome and 50,000 with death outcome. In this balanced dataset a random guess would get 50% accuracy.

When we run our algorithm on that balanced dataset, we get 78.8% accuracy.
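The authors' point about baselines can be checked with a few lines of arithmetic. The sketch below is illustrative only: the cohort size is made up, the 95% survival rate simply echoes the "more than 95%" quoted above, and balancing by downsampling survivors is an assumption (the authors do not say here exactly how they balanced the data).

```python
import random


def accuracy(predictions, labels):
    """Fraction of predictions that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Hypothetical cohort: 1 = survived, 0 = died (sizes invented for illustration).
labels = [1] * 9500 + [0] * 500

# A "classifier" that ignores its input and always predicts "survive".
always_survive = [1] * len(labels)
imbalanced_acc = accuracy(always_survive, labels)

# Balance the classes by downsampling survivors to match the number of deaths
# (an assumed balancing scheme, not necessarily the authors').
rng = random.Random(0)
survivors = [y for y in labels if y == 1]
deaths = [y for y in labels if y == 0]
balanced = rng.sample(survivors, len(deaths)) + deaths
balanced_acc = accuracy([1] * len(balanced), balanced)

print(f"always-survive, original cohort: {imbalanced_acc:.2f}")
print(f"always-survive, balanced cohort: {balanced_acc:.2f}")
```

On the balanced cohort the trivial baseline falls to chance, which is why 78.8% should be read against 50%, not against 95%.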

Does the classifier need to be complicated to achieve its task?

Task: determine whether a given high school student is at risk of dropping out of school in the future.

Personalized Predictions

Since 2012, Wisconsin school administrators like Brown have received their first impression of new students from the Dropout Early Warning System, an ensemble of machine learning algorithms that use historical data — such as students’ test scores, disciplinary records, free or reduced-price lunch status, and race — to predict how likely each sixth through ninth grader in the state is to graduate from high school on time.

But after a decade of use and millions of predictions, The Markup has found that DEWS may be incorrectly and negatively influencing how educators perceive students, particularly students of color.

Do Predictions Need To Be Personalized?

- Individual features: demographics, family background, education history, etc.

- Environmental features:

- School-level: funding, teacher quality, class sizes, average test scores, dropout rates, etc.

- Neighborhood-level: poverty rates, crime rates, district-wide dropout rates, etc.

There are costs to using individual features: privacy concerns, data collection costs, encouragement of various forms of bias, etc.

Perdomo et al. (2025) construct a model \(\mathcal{M}_\text{individual}\) that uses individualized features, and a model \(\mathcal{M}_\text{environmental}\) that uses only environmental features.

Our analysis shows that if we already know these environmental features, incorporating individual features into the predictive model only leads to a slight, marginal improvement in identifying future dropouts…That is, intervening on students identified as being at high risk by this alternative, environmental-based targeting strategy would have the same aggregate effect on high school graduation rates in Wisconsin as the individually-focused DEWS predictions.

- Perdomo et al. (2025)
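One way to compare two targeting strategies is to ask what share of eventual dropouts each one flags when only the top-k highest-risk students can receive an intervention. The sketch below is a toy illustration, not Perdomo et al.'s method: the function name `recall_at_k`, the eight students, and both sets of risk scores are invented, and the numbers say nothing about DEWS itself. The environmental scores are shared within two hypothetical schools to mimic a model that cannot tell students in the same school apart.

```python
def recall_at_k(risk_scores, dropped_out, k):
    """Share of actual dropouts found among the k highest-scored students."""
    # Rank student indices from highest to lowest risk score.
    # sorted() is stable, so tied scores keep their original order.
    ranked = sorted(range(len(risk_scores)),
                    key=lambda i: risk_scores[i], reverse=True)
    flagged = ranked[:k]
    found = sum(dropped_out[i] for i in flagged)
    return found / sum(dropped_out)


# Hypothetical outcomes for 8 students: 1 = dropped out, 0 = graduated.
dropped_out = [1, 0, 1, 0, 0, 1, 0, 0]

# Hypothetical scores from a model using individual features.
individual_scores = [0.9, 0.2, 0.8, 0.3, 0.1, 0.4, 0.6, 0.2]

# Hypothetical scores from a model using only environmental features:
# every student in the same (made-up) school gets the same score.
environmental_scores = [0.7, 0.7, 0.7, 0.2, 0.2, 0.2, 0.7, 0.2]

k = 4  # assume capacity to intervene on four students
print("individual:   ", recall_at_k(individual_scores, dropped_out, k))
print("environmental:", recall_at_k(environmental_scores, dropped_out, k))
```

The question the slide raises is whether any gap between the two numbers is large enough to justify the costs of collecting and using individual features.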

Is the task that the classifier is intended to perform actually possible?

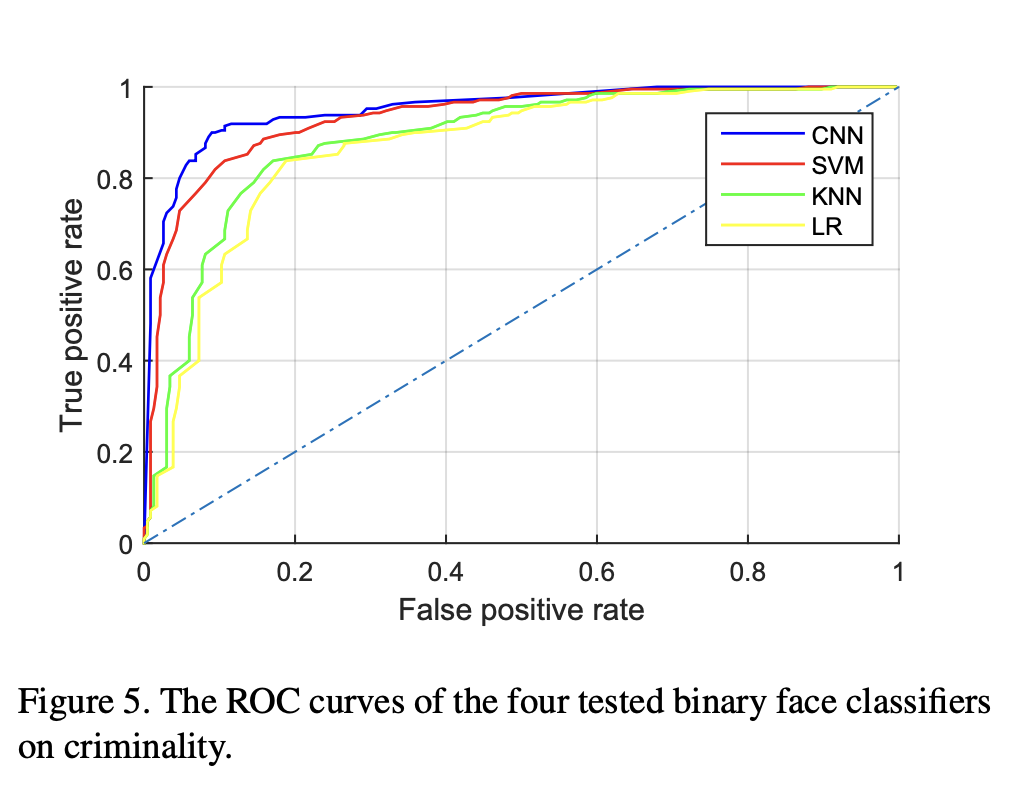

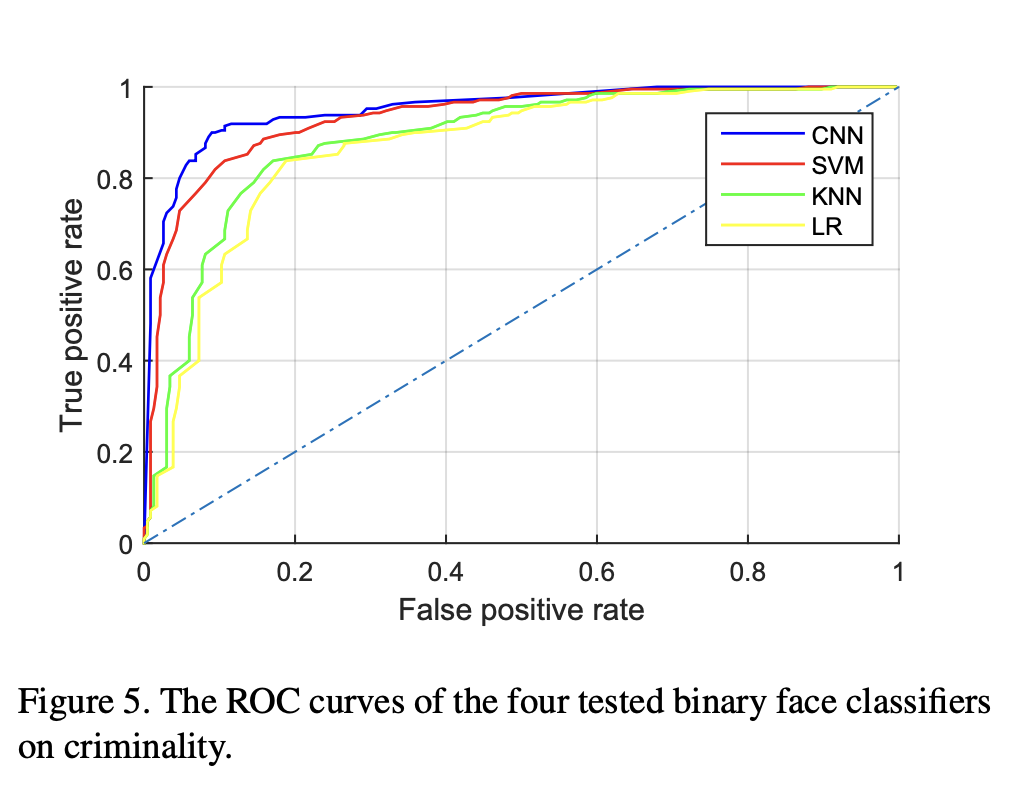

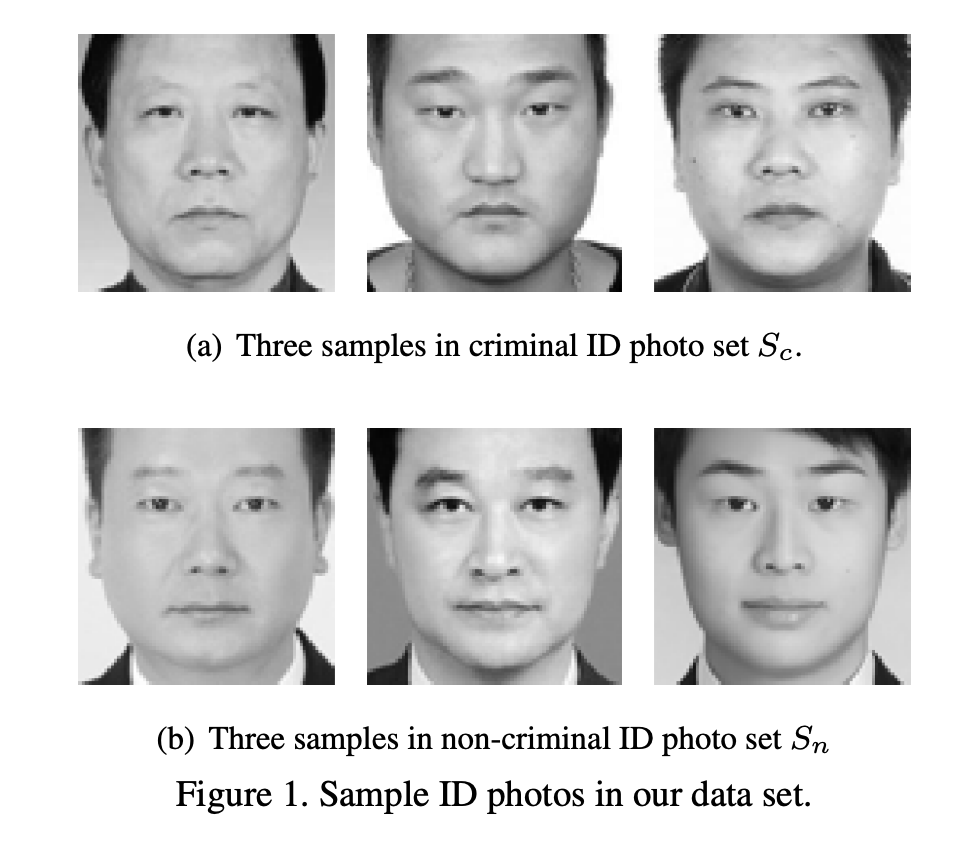

We study, for the first time, automated inference on criminality based solely on still face images, which is free of any biases of subjective judgments of human observers…

All four classifiers perform consistently well and empirically establish the validity of automated face-induced inference on criminality, despite the historical controversy surrounding this line of enquiry.

- Wu and Zhang, 2016. “Automated Inference on Criminality Using Face Images.” arXiv preprint.

What do you notice? What do you wonder?

Always ask about the data…

Subset \(S_c\) contains ID photos of 730 criminals… published as wanted suspects by the ministry of public security of China and by the departments of public security for the provinces of Guangdong, Jiangsu, Liaoning, etc.; the others are provided by a city police department …

Subset \(S_n\) contains ID photos of 1,126 non-criminals that are acquired from Internet using the web spider tool; …including waiters, construction workers, taxi and truck drivers, real estate agents, doctors, lawyers and professors….

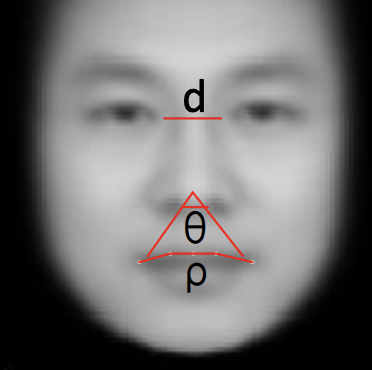

Informative features, according to the researchers

“In conclusion…”

Ask careful questions about predictive machine learning:

- What’s the task on which we are evaluating success?

- Could I achieve the same success with a simpler, less trendy model?

- Do these results pass the sniff test?