Introducing Classification

In this warmup problem, you’ll compute the gradient of the function \(q(\mathbf{x})\), which we’ll use as the signal function for classification in the logistic regression model. As with regression, we’ll use this gradient to train the model.

Part A

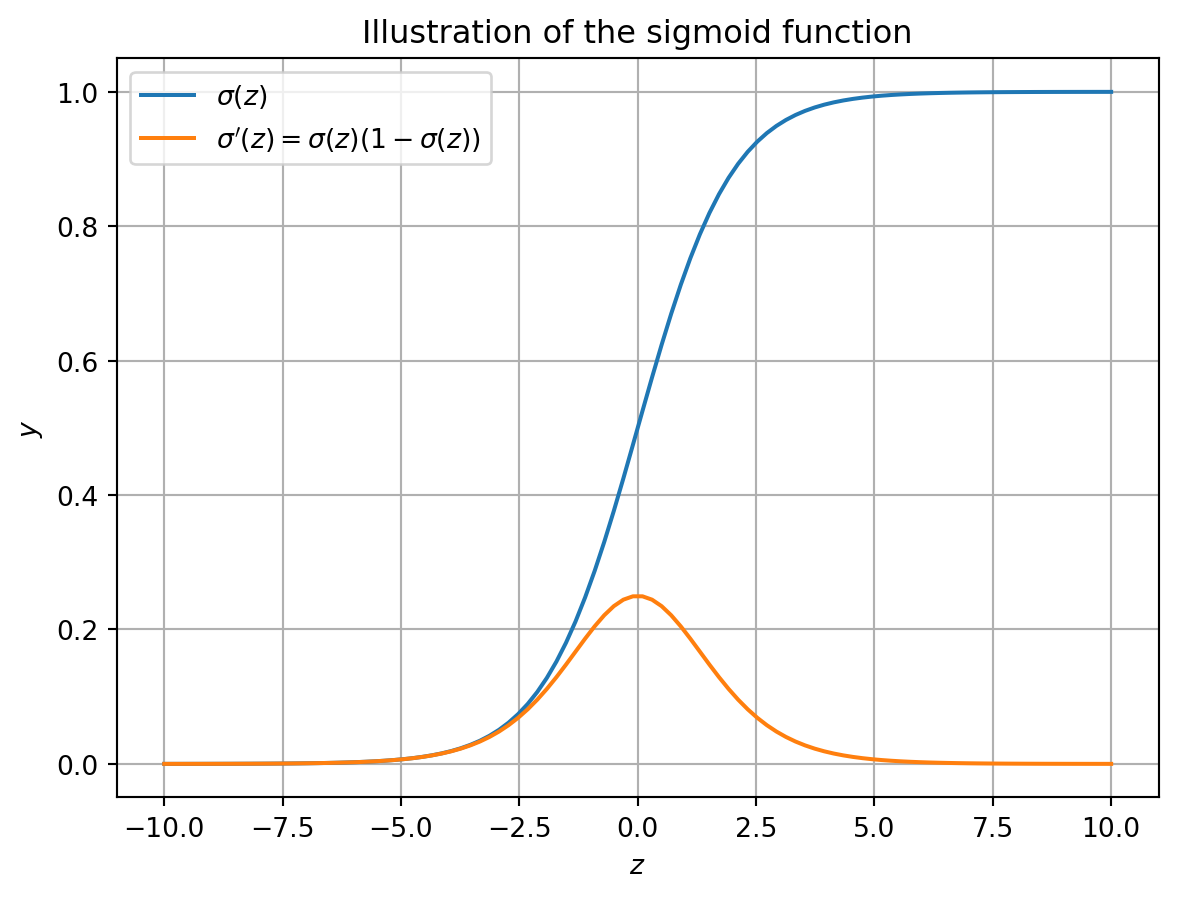

Let \(\sigma:\mathbb{R}\rightarrow (0,1)\) be the logistic sigmoid function, defined by \(\sigma(z) = \frac{1}{1 + e^{-z}}\).

Prove by calculation that for any \(z\in \mathbb{R}\), we have

\[ \begin{aligned} \frac{d}{dz}\sigma(z) = \sigma(z)(1 - \sigma(z))\;. \end{aligned} \]
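A numerical sanity check can help catch algebra slips before you write up the proof. The sketch below is not a proof and is not part of the required answer: it compares a central finite-difference estimate of \(\frac{d}{dz}\sigma(z)\) against \(\sigma(z)(1 - \sigma(z))\) at a few points. The helper names `central_difference` and `max_identity_gap`, the step size `h`, and the test points are all illustrative choices, not something specified by the problem.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def central_difference(f, z, h=1e-5):
    """Approximate f'(z) with a symmetric difference quotient."""
    return (f(z + h) - f(z - h)) / (2.0 * h)


def max_identity_gap(points, h=1e-5):
    """Largest absolute gap between the numerical derivative of sigmoid
    and the claimed closed form sigma(z) * (1 - sigma(z)) over `points`."""
    return max(
        abs(central_difference(sigmoid, z, h) - sigmoid(z) * (1.0 - sigmoid(z)))
        for z in points
    )


# Hypothetical test points; any handful of real numbers would do.
gap = max_identity_gap([-3.0, -1.0, 0.0, 0.5, 2.0])
print(gap)  # should be tiny if the identity holds
```

A small gap is consistent with the identity but does not establish it for all \(z\); the calculation asked for above is still needed.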

Part B

Let \(q:\mathbb{R}^d \rightarrow (0,1)\) be the function defined by \(q(\mathbf{x}) = \sigma(\mathbf{w}^\top \mathbf{x})\) for some \(\mathbf{w}\in \mathbb{R}^d\). Compute the gradient \(\nabla q(\mathbf{x})\), where the partial derivatives are taken with respect to the entries of \(\mathbf{w}\) (not the entries of \(\mathbf{x}\)). Please express your final answer in vectorized form, i.e., as a function of \(\mathbf{w}\), \(\mathbf{x}\), and \(q(\mathbf{x})\), without any summations or explicit indices.

Hint: Start by computing \(\partial q(\mathbf{x})/\partial w_i\) for an arbitrary \(i\in \{1,2,\ldots,d\}\), and then use the result to write down the full gradient.
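Once you have a candidate formula, one way to test it is to compare it against a finite-difference estimate of each partial derivative \(\partial q(\mathbf{x})/\partial w_i\). The sketch below computes only the numerical estimate and deliberately leaves out the closed form. The function name `numerical_gradient`, the step size `h`, and the example values of `w` and `x` are assumptions made for illustration.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def q(w, x):
    """q(x) = sigmoid(w^T x), with w and x given as equal-length lists."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)))


def numerical_gradient(w, x, h=1e-5):
    """Estimate the partial derivatives of q with respect to each w_i
    by perturbing one entry of w at a time (central differences)."""
    grad = []
    for i in range(len(w)):
        w_plus = list(w)
        w_minus = list(w)
        w_plus[i] += h
        w_minus[i] -= h
        grad.append((q(w_plus, x) - q(w_minus, x)) / (2.0 * h))
    return grad


# Hypothetical example values, chosen only for illustration.
w = [0.5, -1.0, 2.0]
x = [1.0, 2.0, 0.0]
print(numerical_gradient(w, x))
```

To use it, evaluate your vectorized answer at the same `w` and `x` and check that it agrees with the printed list entry by entry, up to a small numerical error.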